Movable Type System Architecture Guide

This document illustrates a number of different system configurations for your blogging network. It is a guide to help inform your own architecture according to your own specific requirements and resource constraints.

This document is structured to illustrate the simplest way to architect your blogging network up front and then how to iteratively increase the complexity of your system in order to best meet your specific budgetary and operational requirements.

Simple Architecture

The simplest multi-machine architecture is easy to design and set up. In this configuration, one machine is dedicated to serving pages and processing comments. It is meant to be accessed by the readers of your blog. The second machine’s sole purpose is to serve users who are authoring content, moderating comments and performing other administrative functions.

Comment processing is separated from the primary application to insulate the application server from the load generated by spikes in comment and TrackBack traffic.

Advantages

- App server latency is minimized and insulated from traffic spikes and loads on the public web server.

Risks and Mitigations

- Comment loads can impact page load performance and vice versa. To mitigate, the public server can be virtualized so that page serving and comment processing can be tuned and optimized independently.

Simple Architecture Optimized for Large Reader Base

Using three machines in your blogging system architecture gives you considerably more flexibility in design, and it allows you to build a system that scales to more specific requirements and meets a wider array of use cases.

Separate Page Server and Comment Server

In a majority of circumstances a single web server serving static HTML content can scale to meet an extraordinary demand. However, popular web sites may need to insulate themselves further from the degraded performance that may result from popular posts, comment spam attacks and other sources of peak loads. The following architecture recommendation extends the previous architecture by processing comments and serving pages from dedicated machines.

Advantages

- Provides a higher degree of certainty that readers will still be able to access published content, even when experiencing intense comment and TrackBack loads.

Risks and Mitigations

- This setup is not capable of servicing a large number of commenters. To mitigate, consider load balancing within a Page and Comment Server Cluster (see below).

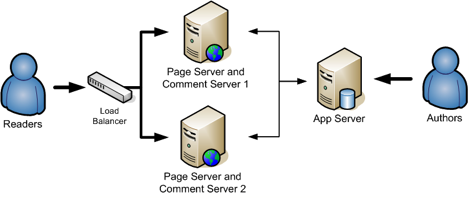

Load Balanced Page and Comment Server Cluster

With higher-end software, it is possible to serve a greater number of active commenters by configuring your network to load balance between two or more Page and Comment Servers.

Advantages

- Provides a higher capacity for commenters.

Risks and Mitigations

- Under extreme load, both the ability to read and the ability to comment on blogs will be impaired. To mitigate, consider a larger system footprint or separating your page and comment servers.

Standard Footprint

The “standard footprint” for a blogging network is perhaps the smallest configuration that is easily scaled to meet a variety of needs. This architecture extends the three-machine setups described above by putting the database on a dedicated server. By doing so, you can more easily scale all of the other components of the system.

Advantages

- Easily scaled by adding servers and load balancing between them.

Risks and Mitigations

- For sites with a constant stream of new content, the app server and page servers may become taxed under load. To mitigate, add dedicated publishing servers to handle page rebuilds.

Standard Footprint with Dedicated Publishing Workers

As a simple extension of the Standard Footprint, one can add a machine dedicated to building and rebuilding pages on the page servers. In this configuration, the publishing front end becomes more responsive because it is no longer tasked with costly I/O operations.

Advantages

- System is optimized to distribute load logically across dedicated servers.

- System is simple and intuitive to scale.

Risks and Mitigations

- While load is evenly distributed, specific machines may still be taxed given different usage scenarios. To mitigate, see “How and What to Scale.”

- System has multiple single points of failure. To mitigate, add load balancers and redundant servers to absorb extra load and to take over when a machine fails.
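
The single points of failure mentioned in this list can be found mechanically from a description of your deployment. The following is a minimal sketch in Python; the role names and machine counts are illustrative assumptions and are not a configuration format that Movable Type reads.

```python
# Hypothetical description of the Standard Footprint with one publishing
# worker: each role maps to the number of machines serving it.
footprint = {
    "app_server": 1,
    "page_server": 1,
    "comment_server": 1,
    "database": 1,
    "publisher": 1,
}


def single_points_of_failure(topology):
    """Return the roles served by exactly one machine, sorted by name."""
    return sorted(role for role, count in topology.items() if count == 1)


def add_redundancy(topology, role, extra=1):
    """Return a copy of the topology with `extra` more machines for `role`."""
    updated = dict(topology)
    updated[role] = updated.get(role, 0) + extra
    return updated
```

Calling `single_points_of_failure(footprint)` lists every role, since each is served by one machine. After `add_redundancy(footprint, "page_server")`, the page server no longer appears in that list.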

Advanced Configuration

Finally, one can optimize all the I/O operations by integrating a dedicated file server. In this configuration, all of the Publisher Servers write files to the file server via NFS, and the Page Servers read those files via NFS as well.

Clustering and Load Balancing

In the configuration above, it is straightforward to convert any of the machines into a cluster in order to improve performance, redundancy, or both.

Load balancers can then be placed at a number of critical connections in order to distribute load, or to detect failures and divert traffic accordingly. Because Movable Type manages session data via a centralized database, it does not present any specific challenges for load balancing or session management.
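
To make the two jobs of a load balancer concrete (distributing load and diverting traffic away from failed machines), here is a minimal round-robin sketch in Python. The class and the backend names are hypothetical and only illustrate the idea; a production deployment would use a dedicated load balancer rather than code like this.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Rotate requests across backends, skipping any marked unhealthy."""

    def __init__(self, backends):
        self.backends = list(backends)
        self.healthy = set(self.backends)
        self._rotation = cycle(self.backends)

    def mark_down(self, backend):
        """Record that a health check failed for this backend."""
        self.healthy.discard(backend)

    def mark_up(self, backend):
        """Record that a known backend has recovered."""
        if backend in self.backends:
            self.healthy.add(backend)

    def next_backend(self):
        """Return the next healthy backend, or raise if none remain."""
        for _ in range(len(self.backends)):
            candidate = next(self._rotation)
            if candidate in self.healthy:
                return candidate
        raise RuntimeError("no healthy backends available")
```

Because session data lives in the central database, the sketch needs no session affinity: any healthy backend can serve any request.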

The database server can likewise be expanded in relatively standard and well-documented ways to provide redundancy in case of failure, disaster recovery, backup, and partitioning, all of which strengthen the operational integrity of your database.

How and What to Scale

Page Servers — In situations where the availability of content is paramount, it is advisable to have at least two page servers in order to ensure that there is not a single point of failure for content delivery. Should one server fail, the other can easily take on the load.

Comment Servers — Popular and public services will often become a gravity well for comment and TrackBack spam, not to mention all of the feedback contributed legitimately by readers and users. For these systems it is advisable to have a number of dedicated comment servers to process all of the incoming comments and TrackBacks. These servers scale predictably and linearly, so select the number of servers based upon your network's usage patterns.
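
If comment servers do scale linearly, sizing them reduces to simple arithmetic. The sketch below, in Python, assumes you have measured two figures yourself: the peak rate of incoming comments and TrackBacks, and the rate one server can sustain. The function name, the parameters and the 25% headroom default are assumptions for illustration only.

```python
import math


def comment_servers_needed(peak_per_second, per_server_capacity, headroom=0.25):
    """Estimate the server count under a linear-scaling assumption.

    peak_per_second: measured peak of comments plus TrackBacks per second.
    per_server_capacity: requests per second one server sustains.
    headroom: extra fraction reserved for spikes (an assumed default).
    """
    if peak_per_second < 0 or per_server_capacity <= 0 or headroom < 0:
        raise ValueError("rates must be non-negative and capacity positive")
    required = peak_per_second * (1 + headroom) / per_server_capacity
    # Always keep at least one server, even for an idle network.
    return max(1, math.ceil(required))
```

For example, a peak of 120 requests per second against servers that each sustain 50 gives `comment_servers_needed(120, 50)`, which evaluates to 3 with the default headroom.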

App Servers — For systems with a large number of blogs and bloggers, like large companies and universities, it is important to make sure that the publishing application itself is highly available and very responsive. Therefore, it is advisable to configure a number of different app servers to handle the traffic created by all the publishers and authors.

Publishers — Systems that generate a lot of comments and content, and systems that need to maintain a very low latency between the time content is received and the time it is published, should invest in a number of machines (or processes) that constantly watch and process the asynchronous build queue.
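
The publisher pattern described here, several workers draining one build queue, can be sketched in Python as follows. This is an illustration under stated assumptions and not Movable Type's implementation: an in-process `queue.Queue` stands in for the shared build queue, threads stand in for publisher machines or processes, and `rebuild_page` is a hypothetical callable supplied by the caller.

```python
import queue
import threading


def publisher_worker(build_queue, rebuild_page, results):
    """Pull page-build jobs until a None sentinel arrives."""
    while True:
        job = build_queue.get()
        try:
            if job is None:
                return
            results.append(rebuild_page(job))
        finally:
            # Mark the item (job or sentinel) as handled so join() can return.
            build_queue.task_done()


def run_publishers(jobs, rebuild_page, worker_count=2):
    """Process every job with `worker_count` concurrent workers."""
    build_queue = queue.Queue()
    results = []
    workers = [
        threading.Thread(
            target=publisher_worker,
            args=(build_queue, rebuild_page, results),
        )
        for _ in range(worker_count)
    ]
    for worker in workers:
        worker.start()
    for job in jobs:
        build_queue.put(job)
    for _ in workers:
        build_queue.put(None)  # one stop signal per worker
    build_queue.join()
    for worker in workers:
        worker.join()
    return results
```

Adding workers shortens the time a job waits in the queue, which is the latency this section is concerned with. Results arrive in completion order, so callers should not rely on them matching the order of `jobs`.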